I commented recently on the problematic issue of education and indoctrination of the young in religious matters on Recursivity. My position has always been that extremism in religious viewpoints (and note the emphasis) must be primarily related to early religious indoctrination that essentially forbids nuance and careful evaluation of facts, opinions and ideas.

I can just barely imagine that an adult exposed to a rich panoply of ideas and perspectives can come to hold extremist views, and this relates to my concern over issues like school voucher programs that contribute to religious schools.

In fact, when confronted with an extremist, my folk psychology would immediately ask how it is that a person raised in a nurturing and unbiased environment could possibly have transitioned to a perspective of extremism.

In effect, I would want to know who harmed them or what crystallizing moment of injustice caused their change.

I’ve argued previously that it is precisely the exposure to the humanity of others via television that has occurred post-WWII that has changed the way we regard war, aggression and the universality of human rights. Racism, cruelty and mass civilian deaths in wartime are no longer acceptable because we now see others as human, instantaneously, via satellite, and with full translations. Why not accord them the dignity of humanity, spare them collective punishment, and avoid torture when we would want the same for ourselves? Our morals and ethics have improved because of secular education and reason combined with extrinsic factors like technology and social dialog, not through some new form of faith that took hold during that period.

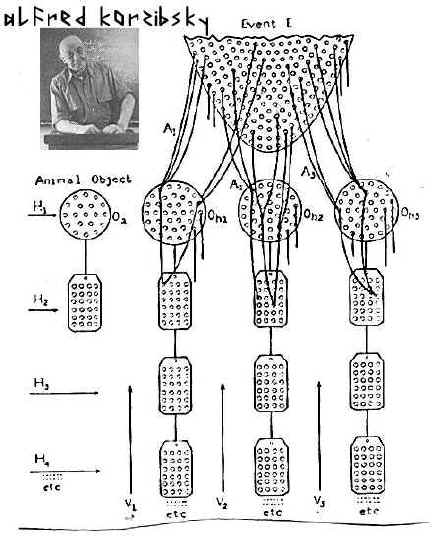

The standard response and challenge to me is to apply a standard of intellectual arbitrariness to the topic and claim that any perspective is still a perspective, and therefore I am as guilty as the religious. There is a curious symmetry with the postmodernist critique of science and reason, here, in the claim that there is no standard for judging the merits of ideas except through a subjective narrative. And my narrative is no better than anyone else's.

But by this standard, faith and reason are leveled to the same standing as intellectual mechanisms, and that, I think, robs the faithful of their most powerful way of regarding faith: that they have special knowledge that transcends mere reason. Evolutionary arguments are then interesting but irrelevant because they are “mere reason,” and the schools are no threat whatsoever in the matter of ideas.

This will make little difference to people like Tim LaHaye, author of the Left Behind series, who writes in The Atlantic this month:

“Until we break the secular educational monopoly that currently expels God, Judeo-Christian moral values and personal accountability from the halls of learning, we will continue to see academic performance decline and the costs of education increase, to the great detriment of millions of young lives.”

His article comes directly after Sam Harris’ musings titled “God Drunk Society” in a collection of short subjects on “The American Idea” by many august writers. LaHaye even slurs together some dubious claims about socialism in early America to justify his position. Actually, California schools teach a complex set of values that seem to transcend and encompass LaHaye’s desires quite nicely:

"Each teacher shall endeavor to impress upon the minds of the pupils the principles of morality, truth, justice, patriotism, and a true comprehension of the rights, duties, and dignity of American citizenship, and the meaning of equality and human dignity, including the promotion of harmonious relations, kindness toward domestic pets and the humane treatment of living creatures, to teach them to avoid idleness, profanity, and falsehood, and to instruct them in manners and morals and the principles of a free government. (b) Each teacher is also encouraged to create and foster an environment that encourages pupils to realize their full potential and that is free from discriminatory attitudes, practices, events, or activities, in order to prevent acts of hate violence, as defined in subdivision (e) of Section 233."

And this is merely the result of very modern reason.